Is model choice the most important thing in getting good results from agents?

For me, the answer is no. The model's contribution is outweighed by the value provided by the harness that drives it. Sure, the model matters, but the top-end models all perform similarly inside my pipeline.

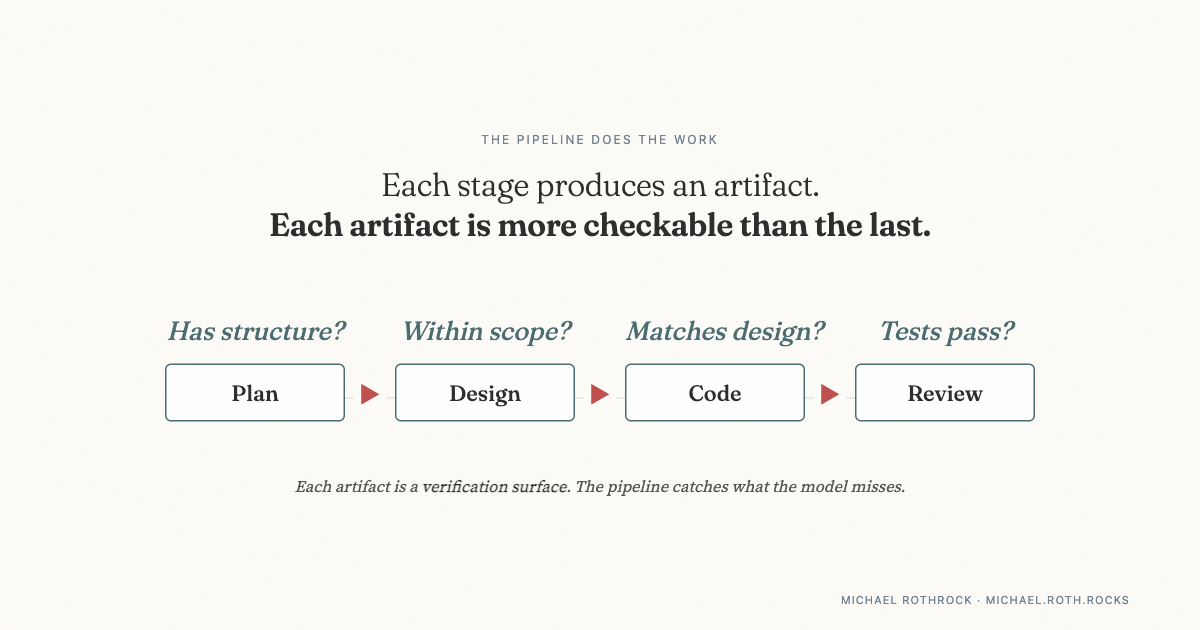

My coding agent goes through a pretty standard software development process: plan, design, code, review. Each stage creates an artifact, and over time I've come to appreciate how important that is. For example, once you have a plan, you can verify whether the design stays within the plan's boundaries. Once you have a design, you can see whether the implementation is over-engineered. By the time you get to code and tests, you have a lot more to grab onto than you had at the prompt.

I don't think of those review steps as process bureaucracy. They are the places where verification becomes possible.
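
Here is a minimal sketch of the idea in Python. It is not the actual harness: `Stage`, `GateRejected`, `run_pipeline`, and the stub lambdas standing in for model calls are all hypothetical names made up for illustration. The point it shows is that each stage's gate can check the new artifact against the artifacts that came before it.

```python
from dataclasses import dataclass
from typing import Callable


class GateRejected(Exception):
    """Raised when a stage's artifact fails its gate."""


@dataclass
class Stage:
    name: str
    # Builds this stage's artifact from the artifacts produced so far.
    # In a real harness this would be a model call; here it is a stub.
    produce: Callable[[dict], str]
    # Checks the new artifact against the earlier artifacts.
    gate: Callable[[str, dict], bool]


def run_pipeline(stages: list, prompt: str) -> dict:
    artifacts = {"prompt": prompt}
    for stage in stages:
        artifact = stage.produce(artifacts)
        if not stage.gate(artifact, artifacts):
            raise GateRejected(stage.name)
        artifacts[stage.name] = artifact
    return artifacts


# Toy stages (assumed for illustration): every gate is a trivial string check.
stages = [
    Stage("plan",
          lambda a: "scope: login endpoint",
          lambda art, a: art.startswith("scope:")),
    Stage("design",
          lambda a: "design: one handler for login endpoint",
          # The design must stay within what the plan scoped.
          lambda art, a: a["plan"].split(": ", 1)[1] in art),
    Stage("code",
          lambda a: "def login(): ...",
          lambda art, a: "login" in art),
    Stage("review",
          lambda a: "approved",
          lambda art, a: art == "approved"),
]

artifacts = run_pipeline(stages, "add a login endpoint")
```

Real gates would be far richer than string checks, but the shape is the same: a gate only has something to check because the previous stage left an artifact behind.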

The same pattern appeared when I tested this in a different domain: medical imaging. I ran the same gates across three models of varying quality. The rejection rate scaled cleanly with model quality. The models differed, but the pipeline could still judge the output quality because the workflow produced a verification surface.
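
The comparison can be sketched as a per-model rejection rate over gate outcomes. This is an assumed bookkeeping shape, not the code from that experiment: `rejection_rates` and the `(model, passed)` record format are hypothetical, and no real results are shown.

```python
from collections import Counter


def rejection_rates(results):
    """Return {model: fraction of gate checks rejected}.

    `results` is an iterable of (model_name, passed_gate) pairs,
    an assumed record format chosen for this sketch.
    """
    total = Counter()
    rejected = Counter()
    for model, passed in results:
        total[model] += 1
        if not passed:
            rejected[model] += 1
    return {model: rejected[model] / total[model] for model in total}
```

Because every model runs through the same gates, the rates are directly comparable across models.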

I strive to make the stages small, but this decomposition-to-stages approach has limits. Some steps are truly atomic and cannot be decomposed further. (Because I like to experiment, I tried it at the next-token-prediction level. Didn't work.)

But once the work can be broken into stages, those verification points start to matter a lot. The industry is spending billions trying to make models smarter. I get why. I just think a lot of teams are leaving reliability on the table because they treat the model as the product and the pipeline as plumbing.

Most of the time when I see one team getting good results from a model and another team getting mediocre results from that same model, I don't think the explanation is the prompt. I think it's everything wrapped around it.

The framework behind this: Trust Topology →